Infinite Jest belongs in the techno-optimist canon

A16Z Culture's most controversial book recommendation so far

Hello long-standing substack readers! It’s been a while since I’ve written here, as 5 months ago I joined a16z and took over the Substack there, where I’ve been writing. If you don’t subscribe to the a16z newsletter, you should!

Now that the a16z newsletter is cooking nicely - we’re publishing every day, it’s growing and maturing into a real publication - one of my fun side projects has been growing its “Culture” section. For anyone who follows my personal substack, you probably know that this is the stuff I most enjoy writing about. We host guest pieces on culture and criticism, book reviews and recommendations, and other pieces that aren’t necessarily business or tech focused, but try to capture what it’s like to be in tech right now in this intense moment.

Here’s what I sent out today. I hope you enjoy it:

A16Z is an optimistic firm. We are excited for the future, we’re excited about technology, and about how people will achieve greatness in their lives.

So it might surprise you, if you know the book by reputation, that I think Infinite Jest belongs in the techno-optimist canon.1 Infinite Jest - published 30 years ago this month - does not have a reputation for being a “techno-optimist” book. In fact, it’s usually cited as a dystopian book, one where advancing technology has brought out the worst of people’s anxieties and addictions.

But Infinite Jest, when you actually read it, is a joyful book. It’s a book about people; it’s about suffering and perseverance and the million points of light that make up a human. It’s a book about seriousness: what it means to surrender to a purpose that’s bigger than you. It’s a book for today’s solo creator, founder, or IC trying to “make it”; it’s a book for talented, but frustrated people; for founders, for posters, for anyone who looks ridiculous but perseveres.

To celebrate its 30th anniversary, I actually read it 2 (I only got through 200-ish pages in my first attempt years ago, which is pretty typical, but you need to struggle through them; it’s part of the experience), and my overwhelming impression after putting it down was, ‘Everyone in tech needs to read this book right now.’ Infinite Jest belongs in the startup and founder canon because it is among the great novels written about struggling a lot, and looking painfully ridiculous. Which, the book argues persuasively, are preconditions for seriousness; which is itself a precondition for optimism:

To be a real optimist, you need to be serious: you need to be a part of something greater than yourself.

To be serious, you have to be externally focused (striving for something external, in service of others, in surrender to a higher mission), not caught up in your own head.

To achieve true external focus, you probably need to struggle a lot. And it won’t be the romantic kind of struggling either; you are going to look ridiculous.

These are obvious truths, but they’re hard to see, even when they stare you in the face. And Infinite Jest’s magic comes from its embrace of the ridiculous, which helps you see and accept these lessons: in the moment as a reader, and one day in your own story.

“What’s water?”

Light spoilers ahead; my intent here is to get you to want to read the book, not ruin it for you, but proceed accordingly.

There are four plot strands in the book:

1) The one the book is famous for, which is “The Entertainment” 3: a piece of content so compelling that anyone who watches forgets to eat or drink, and they go insane if it’s taken away from them.

If you’ve heard of anything in the book, it’s probably this theme, as an emblem for “post-scarcity problems.” Infinite Jest is set in a world of abundance, more-or-less (they’ve figured out energy, albeit with some side effects). The principal plot element of The Entertainment helps frame both the abstract world of “post-scarcity” and the present very real phenomenon of addiction. (Most of the characters are battling demons of some sort, mostly drug addictions of various kinds.)

The Entertainment is of great interest to a group of violent Quebec separatists, who want to use it as a terrorist weapon. This particular plot strand helps organize the book intellectually, as a vessel for debate over post-scarcity problems and timeless values: about freedom and individual choice, about individual versus collective rights, and about American exceptionalism.

2) and 3) are the principal plot narratives of the book, which form a classic “upstairs / downstairs” setup. One plotline follows the students at a youth tennis academy: hard-striving tennis protégés who have their issues (e.g. smoking too much weed) but who, despite their troubles, do achieve moments of purpose and transcendence in their struggle to make it to “the show”, i.e. the professional tennis circuit.4

Meanwhile, down the hill from the tennis academy, there’s a halfway house full of people who have had incredibly messed up lives, and whose own journey of suffering and meaning is largely told through their ongoing participation in AA meetings. The AA backdrop tells the same story as the Tennis Academy, of characters suffering from the bleakest of circumstances.

What the tennis players and the halfway house residents have in common is the internal battles they’re fighting. And it’s in the intense moments of fighting for sobriety that you experience the pure gravitas of their everyday heroism. This is where the characters (and you, the reader) gain such externally oriented empathy, focus and clarity around what it means to be serious - and, accordingly, where a profound and re-born optimism springs forth.

4) Finally, there’s a fourth plot strand which I will not tell you about, to keep the mystery of the book intact.

With this introduction to the basic tenets of the book: why is it worth reading?

DFW’s great gift is in helping us actually see what is right in front of us, and yet hard to describe - like the fish who asks the other fish, “What’s water?” (best known from his 2005 Kenyon College commencement speech, but originally from Infinite Jest, where it is delivered by a biker gang after an AA meeting).

One way we achieve this escape into the sublime, where we can actually see what’s in front of us, is by diving into absurd side plots, jargony technical manuals or slangy dialogue, or other framing devices that help us understand the main plot better despite their logical irrelevance. In his Foreword to the 20th Anniversary edition of IJ, Tom Bissell writes: “Most great prose writers make the real world seem realer - that’s why we read great prose writers. But Wallace does something weirder, something more astounding: even when you’re not reading him, he trains you to study the real world through the lens of his prose.”

DFW employs “ridiculousness” for two purposes. First, the reader of the book is initially going to be guarded, and defensive, against seeing our own struggle in these characters. By placing some of the book’s most anguishing and pivotal scenes in ridiculous and/or gross circumstances (a critical moment in the plot happens in a very funny and disgusting manner in a public bathroom stall), he brings our guard down: when we laugh along, we’re accepting something about ourselves. And second, it helps us accept that our own struggle, our own suffering, can often itself look ridiculous; and that is okay; it is part of being human.

The real world, like Infinite Jest, is full of these lessons:

Not only is this thematically very Infinite Jest-y, but there is one particular Cortisol-spiking Snatch in the book that is critical to the plot, and equally ridiculous as this one

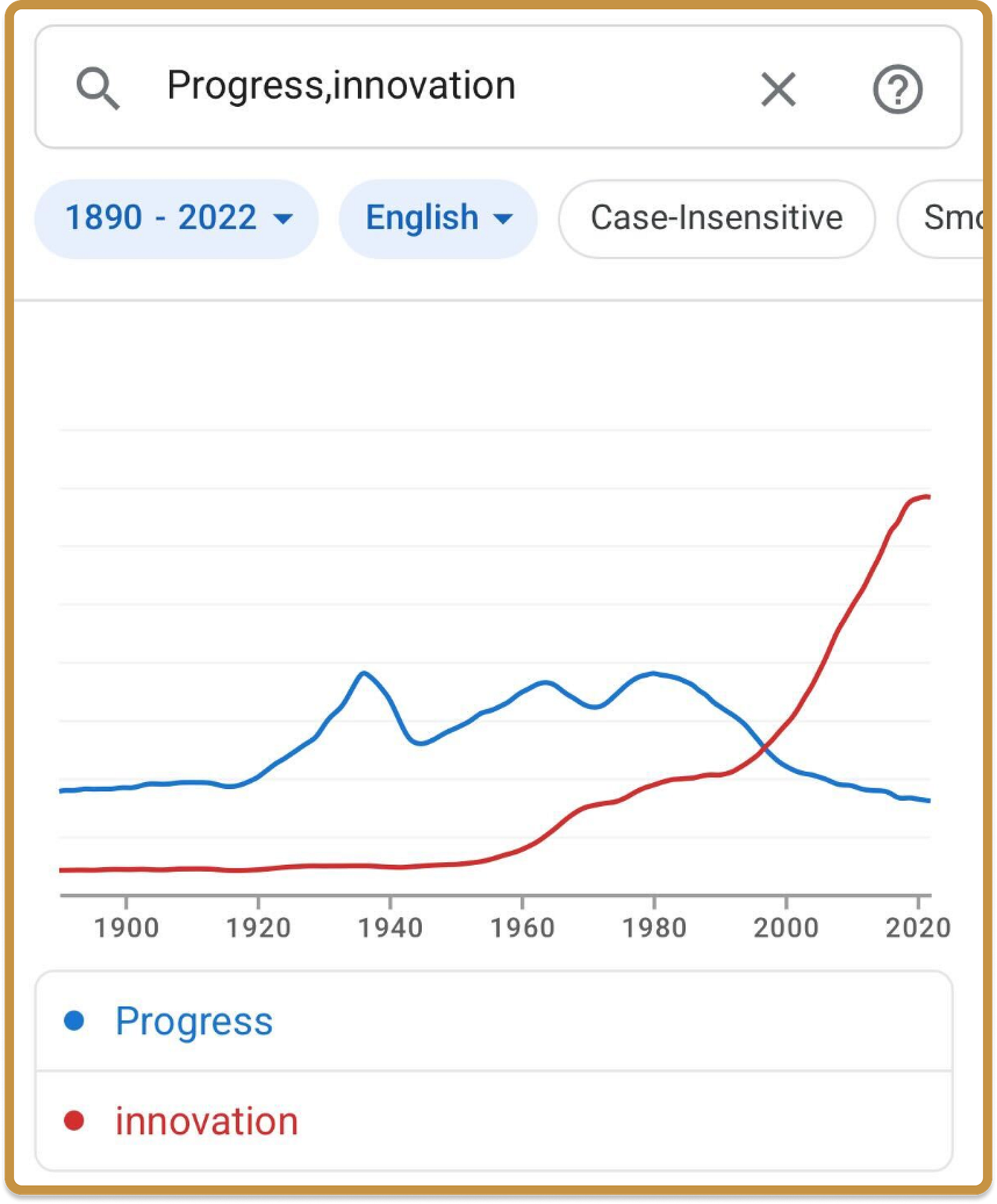

The current Clavicular / Looksmaxxing / World War Mog storyline is a real-world Infinite Jest sideplot 5, where people willingly breaking their jaws in pursuit of looksmaxxing tell a story about transcendence through suffering, and about what “seriousness” is. It’s not like this is what actual suffering is - that’s not the point I’m making here, similar to how DFW’s halfway house residents who are genuinely suffering see their struggle sublimely articulated by a tennis coach up the hill.6 And it’s not because looksmaxxing as practiced here is a good goal; it’s a ridiculous goal, but it’s taken very seriously.

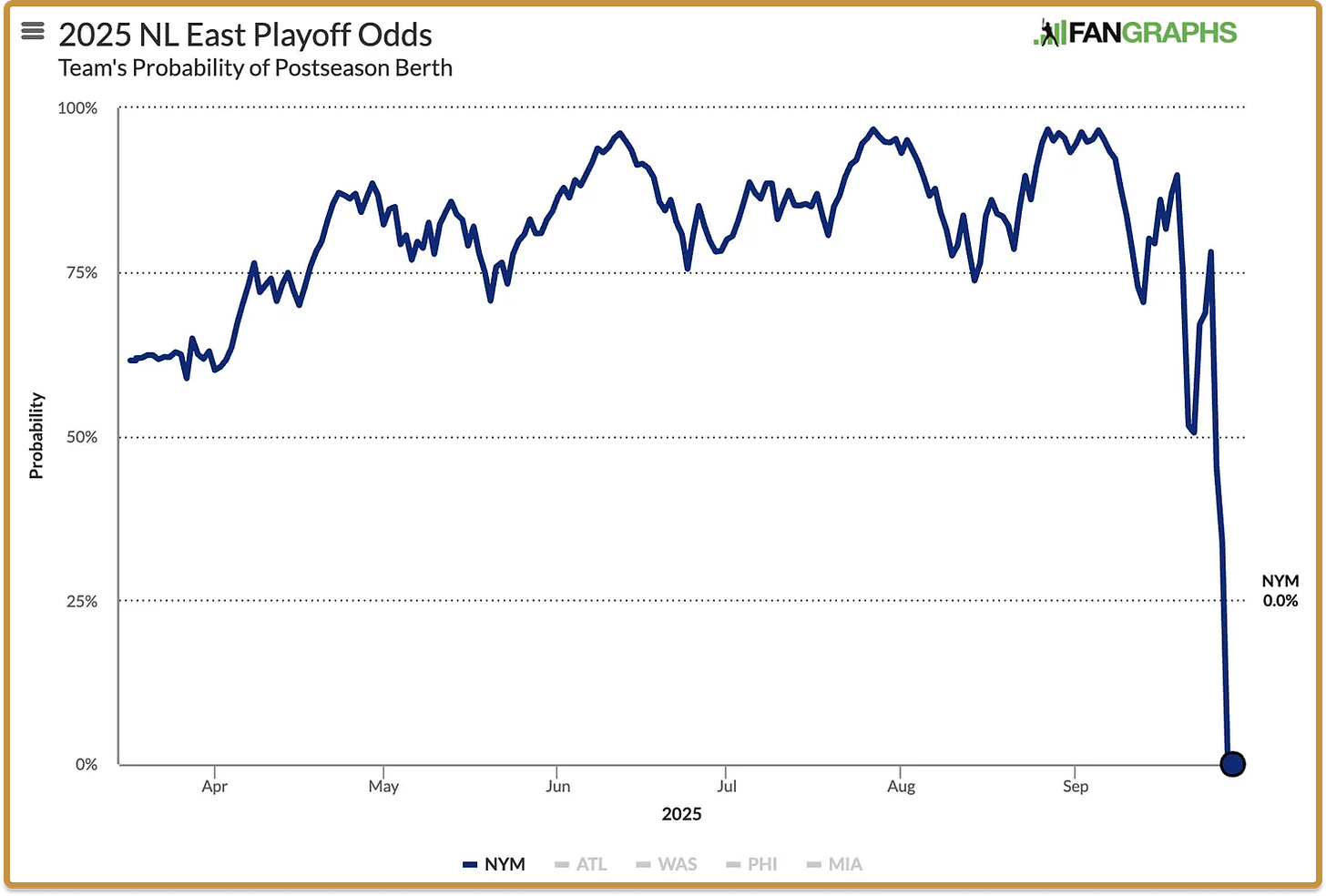

It’s a useful mirror for a tech community that has gone a cultural 180 over the last decade: from the decadence of the late 2010s and the bewilderment of Covid-era tech largesse, to being suddenly dropped into a race for AGI and into the mission of American Dynamism. Many of us in tech went through an experience like Schtitt’s kids7, who needed to get back to being a little fish, and part of a story much bigger than themselves. Back into sweating the details, frantically searching for ways through new, big problems that are suddenly outside of our heads again, with real stakes.8

Infinite Jestermaxxing: “I hope suffering happens to you.”

All great novels have a scene where the main character confronts the devil.9 These climactic scenes bring the moral thrust of the book to a fine point, where all of the lessons of the previous pages come together to express the author’s moral antithesis. In Infinite Jest, that scene takes place at a group therapy session, lionizing a particularly horrifying idea of self-care. It is the antithesis of AA; it foresaw modern “wellness” culture with clear eyes.

A16Z’s own Katherine Boyle wrote an essay a few years back, “On Seriousness”, making the point that “Seriousness” means believing in a project greater than oneself. David Foster Wallace’s characters form into serious tennis players when they learn to really “see” the lines of the court, the game, and their life’s pursuit as something greater than themselves. Clavicular and the jestermaxxers are absurd characters on our current timeline that somehow, illogically but compellingly, help us “see” our own struggle and reincarnation.10 They are looking outward, they are struggling for something, even if that something is ridiculous; arguably because it is ridiculous.

Jensen Huang gave the best version of this:

“One of my great advantages is that I have very low expectations. Most of the Stanford graduates I meet have very high expectations, and you deserve to have high expectations, because you came from a great school. You were very successful; you’re top of your class; obviously you were able to pay for tuition. And you’re graduating from one of the finest institutions on the planet. You’re surrounded by other kids that are just incredible. You naturally have very high expectations.

People with very high expectations have very low resilience. And unfortunately, resilience matters in success. I don’t know how to teach it to you, except for, I hope suffering happens to you.”

So I’ll restate my case for why Infinite Jest belongs in the Techno-Optimist canon: because optimism requires seriousness, and seriousness looks painfully ridiculous in the moments where it counts. The most serious founders often look the most silly! Being a founder means suffering a lot, and looking ridiculous a lot. Being serious about something means being both totally focused on an external, ambitious, hard-to-achieve goal while also flailing around as a complete beginner.

At A16Z, our tennis coach up the hill is this guy Ben Horowitz, who wrote a book called The Hard Thing About Hard Things.11 If you go find your copy, turn to Page 61, and he’ll tell you about the Struggle:

The Struggle is when you wonder why you started the company in the first place.

The Struggle is when people ask why you don’t quit and you don’t know the answer.

The Struggle is when your employees think you are lying and you think they might be right.

The Struggle is when food loses its taste.

David Foster Wallace’s characters certainly know the struggle. Reading fiction is an incredible gift, for that reason. You can learn their lessons from experiencing their pain. I’m not saying it’s a literal substitute for the pain itself, but it’ll make you a whole lot more prepared for when it comes around.12

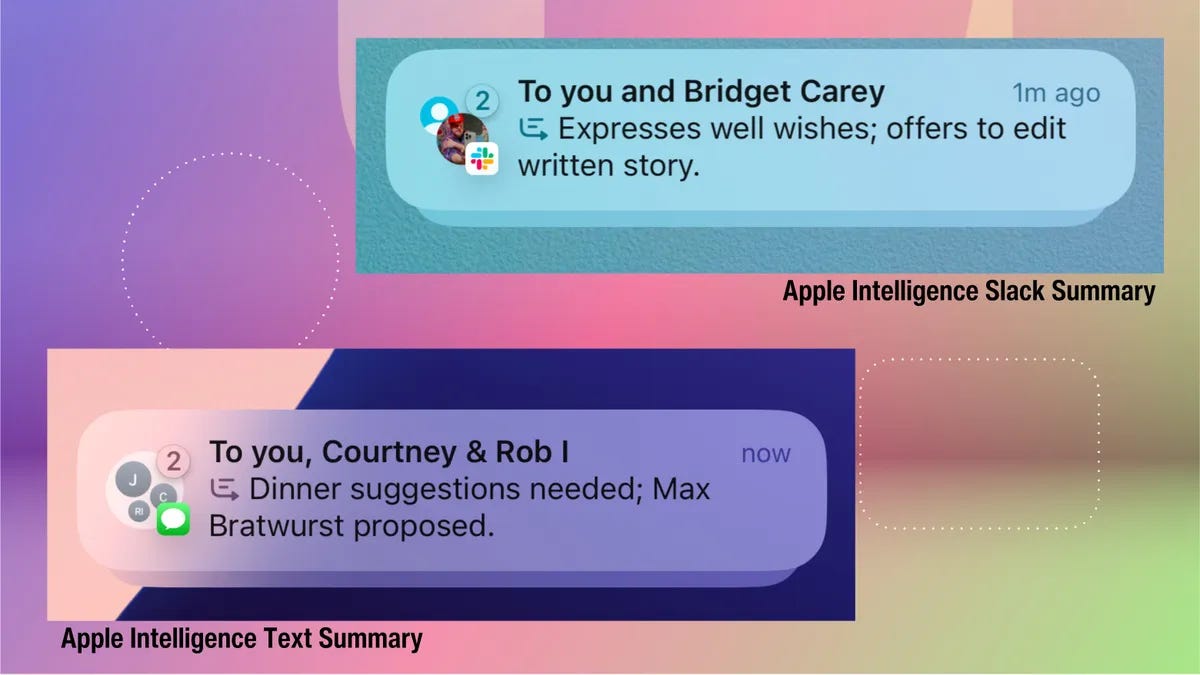

I wrote the other week that the important test of our humanity in the next several years is what we do to help others across the threshold, into a world where everyone is a beginner again and everyone has a blank-slate opportunity to be serious about what they want to do with all of this new agency and freedom. But people may also lose their jobs and their self-image; they will look ridiculous. They will know frustration.

The human greatness that’s to come is going to come from the struggle; it’s how individual people are going to get oriented within the new lines of possibility, like the lines on one of DFW’s tennis courts, and find their own version of greatness.

If you want to enter the future as a prepared, optimistic technologist: pick up Infinite Jest. It’ll make you laugh, it’ll make you grateful, it’ll make you joyful. And, yes, reading it will be an experience in pain and suffering. I wish you ample doses of it.

See https://a16z.com/the-techno-optimist-manifesto/ for more on Techno Optimism.

I also re-read The Hard Thing About Hard Things by our own Ben Horowitz at the same time, which was a funny juxtaposition but really worked.

A piece of content so compelling that it is used as a terrorist weapon by Quebec separatists (a), in the Year of the Depend Adult Undergarment (b)

A. Les Assassins des Fauteuils Rollents, known in English as the Wheelchair Assassins, which as an aside demonstrates a recurring joke that surely goes over >90% of the readers’ heads, which is that a great many of the Quebecois French words and idioms are misspelled: e.g. “Rollents” should be “Roulants”.

B. Infinite Jest takes place in a world where calendar years have paid sponsors, similar to college football playoff games. Hence, e.g., “Year of the Trial-Size Dove Bar”.

The elder philosopher drill coach at the academy, Gerhardt Schtitt, frames the book’s concept of virtue (as relayed through his colleague), about how the academy helps those kids not completely lose their minds under the pressure of competition and fame:

“These kids are here to learn to see. Schtitt’s thing is self-transcendence through pain. These kids, they’re here to get lost in something bigger than them. To have it stay the way it was when they started, the game as something bigger, at first. Then they show talent, start winning, become big fish in their ponds out there in their hometowns, stop being able to get lost inside the game and see. [Messes] with a junior’s head, talent. They pay top dollar to come here and go back to being little fish and to get savaged and feel small and see and develop.”

Schtitt’s philosophy can be found in greater detail in an early section of the book, where he delivers an exhausting and absolutely disorienting treatise on “seeing within lines” of a tennis court that makes no sense until you’re most of the way through the book, at which point you will try to find it but fail to find the relevant chapter after at least 5 minutes of flipping through pages, for there is no table of contents nor chapter numbers in this >1000 page book.

Really he’s more of a Cervantes character, but anyway;

Or how you, the reader, suffer through pages and pages of detail you do not want to read but you know you have to, because critical plot elements will be buried in what is otherwise a 1000-word footnote on Boston-area drug slang taxonomy - the technique is brutally effective.

See note 4.

This newsletter is provided for informational purposes only, and should not be relied upon as legal, business, investment, or tax advice. Furthermore, this content is not investment advice, nor is it intended for use by any investors or prospective investors in any a16z funds. This newsletter may link to other websites or contain other information obtained from third-party sources - a16z has not independently verified nor makes any representations about the current or enduring accuracy of such information. If this content includes third-party advertisements, a16z has not reviewed such advertisements and does not endorse any advertising content or related companies contained therein. Any investments or portfolio companies mentioned, referred to, or described are not representative of all investments in vehicles managed by a16z; visit https://a16z.com/investment-list/ for a full list of investments. Other important information can be found at a16z.com/disclosures. You’re receiving this newsletter since you opted in earlier; if you would like to opt out of future newsletters you may unsubscribe immediately.

Infinite Jest’s devil construct, like many other structural elements of the book, draws heavily from Dostoyevsky’s The Brothers Karamazov (which is my favorite book of all time). Another major similarity is that the Incandenza brothers (Orin, Hal, Mario) are modeled fairly explicitly on Dmitri, Ivan and Alyosha Karamazov: Orin the sensualist, Hal the literate skeptic, Mario the Christ-like figure; with dad being, you know, the dad.

Clavicular was mid jestergooning when a group of Foids came and spiked his Cortisol levels.

See note 1.

“Since it is so likely that [children] will meet cruel enemies, let them at least have heard of brave knights and heroic courage.” - C.S. Lewis